Juho Kim is an Associate Professor in the School of Computing at KAIST, and directs KIXLAB (the KAIST Interaction Lab). He is also affiliated faculty in the Kim Jaechul Graduate School of AI at KAIST. His research in human-computer interaction and human-AI interaction focuses on building interactive and intelligent systems that support interaction at scale, aiming to improve the ways people learn, collaborate, discuss, make decisions, and take action online.

He earned his Ph.D. from MIT, M.S. from Stanford University, and B.S. from Seoul National University. In 2015-2016, he was a Visiting Assistant Professor and a Brown Fellow at Stanford University. He is a recipient of a KIISE/IEEE-CS Young Computer Researcher Award, KAIST's Songam Distinguished Research Award, Grand Prize in Creative Teaching, Q-Day Creative Education Award, and Excellence in Teaching Award, as well as nearly 20 paper awards from ACM CHI, ACM CSCW, ACM Learning at Scale, ACM IUI, ACM DIS, and AAAI HCOMP. He recently spent his sabbatical year as a chief scientist at Ringle Inc. to transfer his research on AI-powered analysis and diagnosis of English learners' proficiency into a real product. He gave a keynote at NeurIPS 2022 titled "Interaction-Centric AI".

If you're interested in working with me at KAIST, please read this page. Also, in this interview video for HCI Korea, I summarize KIXLAB's representative projects and share my thoughts on research and mentoring. English transcription is available.

Video of my recent talks on "Interaction-Centric AI": (1) NeurIPS 2022 keynote (targeted at AI audience), (2) Stanford HCI Seminar (targeted at HCI audience)

Schedule

Office hours: by appointment

N1 Room 605, KAIST

291 Daehak-ro, Yuseong-gu

Daejeon, Korea 34141

Tel: (+82)-042-350-3570

- 2024.10.14: KAIST Software Graduate Program. Colloquium Speaker.

- 2024.10.11: Multicampus 2025 HRD Seminar. Invited Speaker.

- 2024.09.25: Ubob Smart Learning Insight Forum. Invited Speaker.

- 2024.06.17-21: CVPR 2024. Invited Speaker at "Learning from Procedural Videos and Language: What is Next?" workshop.

- 2024.05.30: SNU EE. Guest Lecturer.

- 2024.05.11-16: CHI 2024. DC mentor, 8 full papers, 6 workshop papers.

- 2024.04.19: Korean Neuropsychiatric Association (KNPA) Annual Meeting. Invited Speaker.

- 2024.04.19: Software Convergence Symposium (SWCS) 2024. Invited Speaker.

- 2024.04.16: KEPCO. Invited Speaker.

- 2024.04.16: Daejeon Education & Science Research Institute. Invited Speaker.

- 2024.04.03: Institute of Social Sciences, Seoul National University. Invited Speaker.

- 2024.01.08: Samsung Electronics. Invited Speaker.

- 2023.11.30: Naver Tech Talk. Invited Speaker.

- 2023.11.28: IBK Changgong Demo Day. Keynote Speaker.

- 2023.11.14: NRC-KAIST Symposium. Keynote Speaker.

- 2023.11.09: Samsung Electronics. Invited Speaker.

- 2023.11.06: KAIST Software Graduate Program Seminar (KTP966). Invited Speaker.

- 2023.10.29-11.01: UIST 2023. San Francisco, USA.

- 2023.10.26: CS-525 Online Learning Infrastructures by Neil Heffernan @ WPI. Guest Lecturer.

- 2023.10.05: CXI Speakers Series @ Samsung Electronics. Invited Speaker.

- 2023.10.05: AI & Startups with LB Conference. Main Speaker.

- 2023.09.21-22: ONR Grant Program Review Meeting. Boston, USA.

- 2023.09.07: Mobile 360 APAC by GSMA. Invited Speaker: "The AI Era: What's Next?".

- 2023.08.26: Ringle Webinar Series on Graduate School. Invited Speaker.

- 2023.08.25: Metaverse Academy. Invited Speaker.

- 2023.08.21: KAIST Leadership Innovation Day: Generative AI + Education. Invited Speaker.

- 2023.08.17: AI Graduate School Symposium. Invited Speaker.

- 2023.08.11: LX Hausys. Invited Speaker.

- 2023.07.04: SICSS-Korea (Summer Institute in Computational Social Science). Invited Speaker.

- 2023.05.16: Samsung Electronics. Invited Speaker.

- 2023.05.11: NIST AI Metrology Colloquium Series. Invited Speaker.

- 2023.05.10: SoC Catering Career Talk, KAIST. Invited Speaker.

- 2023.05.04: Adobe Research HCI/Viz Seminars. Invited Speaker.

- 2023.05.04: National Academy of Engineering of Korea and AI Future Forum: R&D Strategies for Overcoming the Limitations of Large AI Models. Panelist.

- 2023.04.27: KERIS AI+Education Seminar. Invited Speaker.

- 2023.04.26: ETNews Korea HCI & UI/UX Grand Summit 2023. Invited Speaker.

- 2023.04.25: Riiid Tech Talk. Invited Speaker.

- 2023.04.21: Software Convergence Symposium (SWCS) 2023. Keynote Speaker.

- 2023.03.14: The Kim Jaechul Graduate School of AI at KAIST, Colloquium. Invited Speaker.

- 2023.02.07: San Jose State University Department of Design. Invited Speaker & Guest Lecturer.

- 2023.01.10: Korea University, School of Cybersecurity. Invited Speaker.

- 2022.12.09: Stanford Seminar on People, Computers and Design. Invited Speaker.

- 2022.12.05: MIT CSAIL HCI Seminar. Invited Speaker.

- 2022.11.30: NeurIPS 2022. Keynote Speaker.

- Jun 20 2024 MS admissions info for fall 2025 is posted.

- Apr 21 2025 I'm looking for undergrad research interns for the summer.

- Oct 25 2024 I'm looking for undergrad research interns for the winter.

- Oct 7 2024 MS admissions info for spring 2025 is posted.

- Sep 21 2024 One EMNLP paper has been accepted. Our Interspeech paper is shortlisted for the ISCA Best Student Paper Award 2024. Our NAACL paper wins a Resource Award.

- Jul 4 2024 MS admissions info for fall 2024 is posted.

- Jun 1 2024 Three DIS papers and one Interspeech paper have been accepted.

- Apr 25 2024 Two KIXLAB papers at CHI 2024 won Best Paper Honorable Mention awards.

- Apr 18 2024 I'm looking for undergrad research interns for the summer.

- Mar 31 2024 Our paper "One vs. Many: Comprehending Accurate Information from Multiple Erroneous and Inconsistent AI Generations" has been accepted to FAccT 2024.

- Mar 28 2024 I'm co-organizing a workshop at EDM 2024: Leveraging Large Language Models for Next Generation Educational Technologies.

- Mar 26 2024 I'm co-organizing a workshop at L@S 2024: the second annual workshop on Learnersourcing: Student-generated Content @ Scale.

- Mar 23 2024 Our paper "EduLive: Re-Creating Cues for Instructor-Learner Interaction in Educational Live Streams with Learners' Transcript-Based Annotations" has been accepted to PACM HCI (CSCW 2024).

- Mar 22 2024 Humbled to receive an Outstanding Ethics Professor Award (우수윤리교수상) from the KAIST Graduate Student Association.

- Mar 22 2024 Our LAK'24 paper titled "Bridging Learnersourcing and AI: Exploring the Dynamics of Student-AI Collaborative Feedback Generation" wins a Best Short Paper Award.

- Mar 14 2024 Our paper "Exploring Cross-Cultural Differences in English Hate Speech Annotations: From Dataset Construction to Analysis" has been accepted to NAACL 2024.

- Dec 14 2023 Eight papers are conditionally accepted to CHI 2024.

- Dec 14 2023 FLASK is accepted to ICLR 2024 as Spotlight.

- Dec 14 2023 Four papers are accepted to IUI 2024.

- Dec 12 2023 I received a KAIST Global Research Cooperation Award.

- Dec 5 2023 I'm co-organizing a CHI 2024 workshop: "HEAL: Human-centered Evaluation and Auditing of Language Models".

- Dec 2 2023 Our paper "Is the Same Performance Really the Same?: Understanding How Listeners Perceive ASR Results Differently According to the Speaker's Accent" has been accepted to PACM HCI (CSCW 2024).

- Dec 1 2023 One LAK'24 short paper has been accepted.

- Oct 23 2023 I'm looking for undergrad research interns for the winter.

- Oct 23 2023 I have been appointed as an Educational Innovation Advisor at KAIST, with the mission to promote AI-applied teaching and learning in the university.

- Oct 13 2023 MS admissions info for spring 2024 is posted.

- Sep 19 2023 Our papers "CodeTree: A System for Learnersourcing Subgoal Hierarchies in Code Examples" and "ReSPect: Enabling Active and Scalable Responses to Networked Online Harassment" are accepted to PACM HCI (CSCW 2024).

- Sep 1 2023 I received tenure from KAIST.

- Aug 6 2023 Our paper "Cells, Generators, and Lenses: Design Framework for Object-Oriented Interaction with Large Language Models" is accepted to UIST 2023.

- Jul 16 2023 Our paper "EmphasisChecker: A Tool for Guiding Chart and Caption Emphasis" is accepted to IEEE VIS 2023.

- Apr 25 2023 I'm looking for undergrad research interns for the summer.

- Mar 7 2023 Excited to serve on the CSCW Steering Committee.

- Jan 17 2023 KIXLAB has six papers accepted to CHI 2023.

- Jan 14 2023 Video of my recent talks on "Interaction-Centric AI": (1) NeurIPS 2022 keynote (targeted at AI audience), (2) Stanford HCI Seminar (targeted at HCI audience)

- Jan 3 2023 Our paper "Large-scale Text-to-Image Generation Models for Visual Artists' Creative Works" is accepted to IUI 2023.

- Dec 22 2022 Honored to receive a 2022 KIISE/IEEE-CS Young Computer Researcher Award (2022 젊은정보과학자상), awarded to an outstanding computing researcher who is 40 or under each year.

- Dec 6 2022 Honored to receive a Creative Education Award at Q-Day KAIST for my contribution to the design and creation of the interdisciplinary STAR courses.

- Educational Innovation Advisor at KAIST

- CSCW Steering Committee

- Subcommittee Co-Chair: CHI 2022-2023

- Program Committee: CHI 2018-2019 & 2021, AAAI 2021 (SPC), CSCW 2017-2019, Learning at Scale 2015-2020, TheWebConf 2022, SIGCSE 2022, HCOMP 2016 & 2020, Creativity & Cognition 2019, Collective Intelligence 2019, UIST 2017, CHI 2015 WIP

- Demos Co-Chair: CSCW 2022

- Late-Breaking Work Co-Chair: CHI 2021

- Editor: UX of AI Forum, ACM Interactions Magazine. 2019-2022

- Associate Editor: IEEE TLT. 2020-current

- Member: SIGCHI Asian Development Committee. 2019-2021

- Member: SIGCHI Nomination Committee. 2020

- Vice President & Advisory Board: SIGCHI Korea Chapter. 2019-current

- Vice President: HCI Society of Korea. 2019

- Papers Co-Chair: CSCW 2020

- Best Papers Co-Chair: CSCW 2019

- Social Media Co-Chair: CHI 2020

- Publicity Co-Chair: ISS 2019

- Best Paper Committee: CHI 2018, CSCW 2018

- Doctoral Consortium Co-Chair: HCI Korea 2018-2019

- Demo Co-Chair: UIST 2017-2018

- Poster Co-Chair: UIST 2015-2016

- Workshop Organizer: Crowdsourcing Law and Policy (CSCW 2017), Connecting Collaborative & Crowd Work with Online Education (CSCW 2015), CrowdCamp (HCOMP 2013-2014)

- Webmaster: CHI 2015

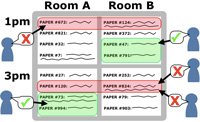

- Scheduling + Communitysourcing: CHI 2013-2015, CSCW 2014-2015

- Fall 2023 [CS492G] Human-AI Interaction

- Fall 2023 [CS473] Introduction to Social Computing

- Spring 2023 [CS374] Introduction to HCI

- Fall 2021 [CS473] Introduction to Social Computing

- Fall 2021 [CoE491] AI for Smart Life

- Spring 2021 [CS492E] Human-AI Interaction

- Spring 2021 [CS374] Introduction to HCI

- Fall 2020 [CS473] Introduction to Social Computing

- Fall 2020 [CS492F] Human-AI Interaction

- Spring 2020 [CS492D] Introduction to Research

- Spring 2020 [CS374] Introduction to HCI

- Fall 2019 [CS473] Introduction to Social Computing

- Spring 2019 [CS492C] Introduction to Research

- Spring 2019 [CS374] Introduction to HCI

- Fall 2018 [CS473] Introduction to Social Computing

- Fall 2018 [CS492C] Introduction to Research

- Fall 2018 [CS408F] Computer Science Project

- Spring 2018 [CS374] Introduction to HCI

- Spring 2018 [CS408E] Computer Science Project

- Fall 2017 [CS492] Crowdsourcing and Social Computing

- Spring 2017 [CS374] Introduction to HCI

- Spring 2017 [CS966/986] SoC Colloquium

- Fall 2016 [CS492] Crowdsourcing

- Assistant professor in the School of Computing at KAIST

- Visiting assistant professor of Computer Science and Brown Fellow at Stanford University, working in the Brown Institute and the HCI Group

- Ph.D. in EECS from MIT. Worked in the UID Group @ CSAIL

- Research intern at Microsoft Research

- Research intern at edX Learning Sciences

- Research intern at Adobe's Creative Technologies Lab

- Research intern @ USER Group at the IBM Almaden Research Center

- M.S. in Computer Science from Stanford University. Worked in the HCI Group

- Product manager / embedded software engineer at SystemBase in Seoul, Korea

- B.S. in Computer Science and Engineering from Seoul National University